Threats to credible research: Where are we at?

25/05/2026

Licence

This work was originally created by Felix Schönbrodt under a CC-BY 4.0 (Creative Commons Attribution 4.0 International) License. This current work by Sarah von Grebmer zu Wolfsthurn, Malika Ihle and Felix Schönbrodt is licensed under a CC-BY-SA 4.0 (Creative Commons Attribution-ShareAlike 4.0 International) License. It permits unrestricted re-use, distribution, and reproduction in any medium, provided the original work is properly cited. If you remix, transform, or build upon the material, you must distribute your contributions under the same license as the original.

Contribution statement

Creator: Von Grebmer zu Wolfsthurn, Sarah (ORCID: 0000-0002-6413-3895)

Reviewer: Schönbrodt, Felix (ORCID: 0000-0002-8282-3910)

Consultant: Ihle, Malika (ORCID: 0000-0002-3242-5981)

Prerequisites

Important

Before completing this submodule, please carefully read about the prerequisites.

| Prerequisite | Description | Link/Where to find it |
|---|---|---|
| UNESCO Recommendations on Open Science | Recommended reading: pp 6-19 | Download Link |

Threats to credible research - Intro Survey

Survey

Based on your experience so far, how would you currently rate your trust in published scientific findings on a scale from 1 - 5?

- 1 = trusting virtually none of the findings

- 2 = trusting only some findings

- 3 = trusting about half of the findings

- 4 = trusting the majority of the findings

- 5 = trusting virtually all findings

Survey

Based on your experience so far, which challenges to research trustworthiness do you see? Name a few keywords.

Display Wordcloud answer.

Survey

What is your level of familiarity with Open Research practices in general (e.g., basic concepts, terminology, or tools)?

I am unfamiliar with the concept of Open Research practices.

I have heard of them but I would not know how they apply to my work.

I have basic understanding and experience with Open Research practices in my own work/research/studies.

I am very familiar with Open Research practices and routinely apply them in my daily work/research/study routines.

Discussion of survey results

What do we see in the results?

Your current understanding of key terms (6 min)

Think - Pair - Share

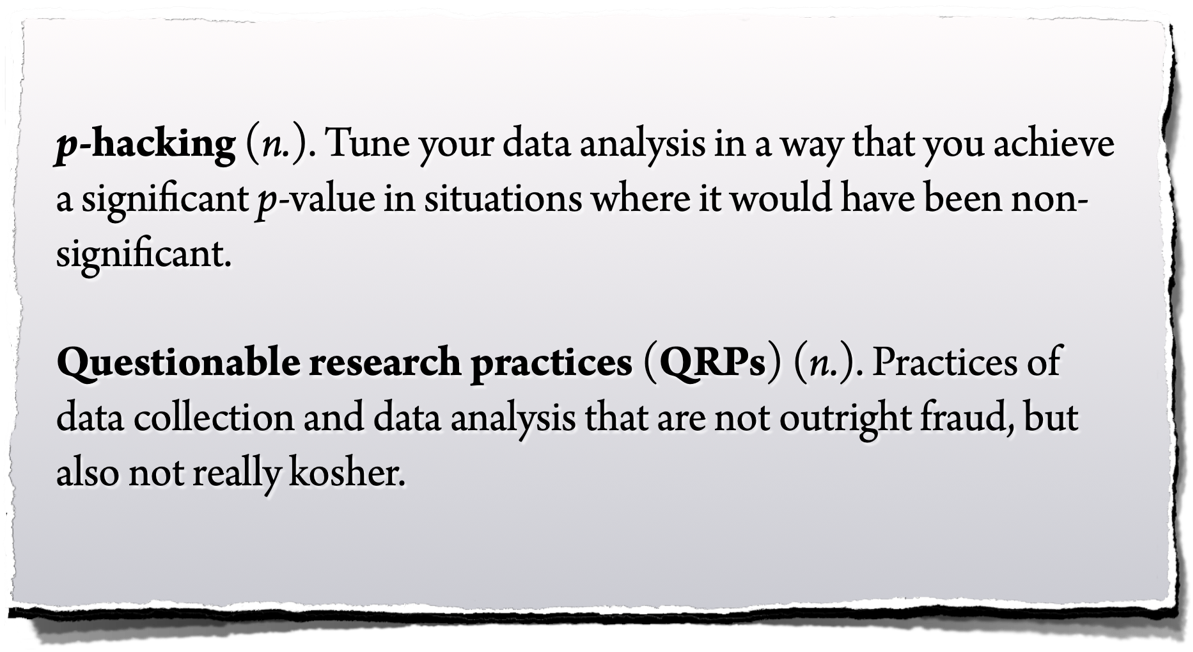

- Think (2 min): For yourself, write down a definition for each of these key terms (without internet search, just your current understanding): Replicability, Replication, Reproduction, p-hacking

- Pair (2 min): Discuss your definitions with your neighbour

- Share (2 min): Share your definitions with the larger group

Note - this is not a test!

These terms probably have not been covered in your courses yet. We just want to see what your current understanding of these terms is.

Covered in this session

- Do people trust in science?

- Should people trust in science? Replicability and reproducibility

- Threats to replicability: p-hacking, errors, and biases in research

Learning goals

By the end of this session, learners will be able to:

- Define and distinguish key terms related to research, including research cycle, trust in research, replicability, reproducibility, and open research

- Recognize different types of challenges in research (research biases, statistical insecurities, and errors) and where they can arise from

- Analyze research scenarios to identify potential research biases and questionable research practices

- Reflect on the current threats to research and on the need for alternative approaches to conducting research

Do people trust in research?

What is public “trust” in research?

“Society trusts that scientific research results are an honest and accurate reflection of a researcher’s work.” (Committee on Science, Engineering and Public Policy 2009: ix)

“The public must be able to trust the science and scientific process informing public policy decisions.” (Obama 2009)

Resnik, D. B. (2011). Scientific research and the public trust. Science and engineering ethics, 17(3), 399-409.

Measuring trust in research

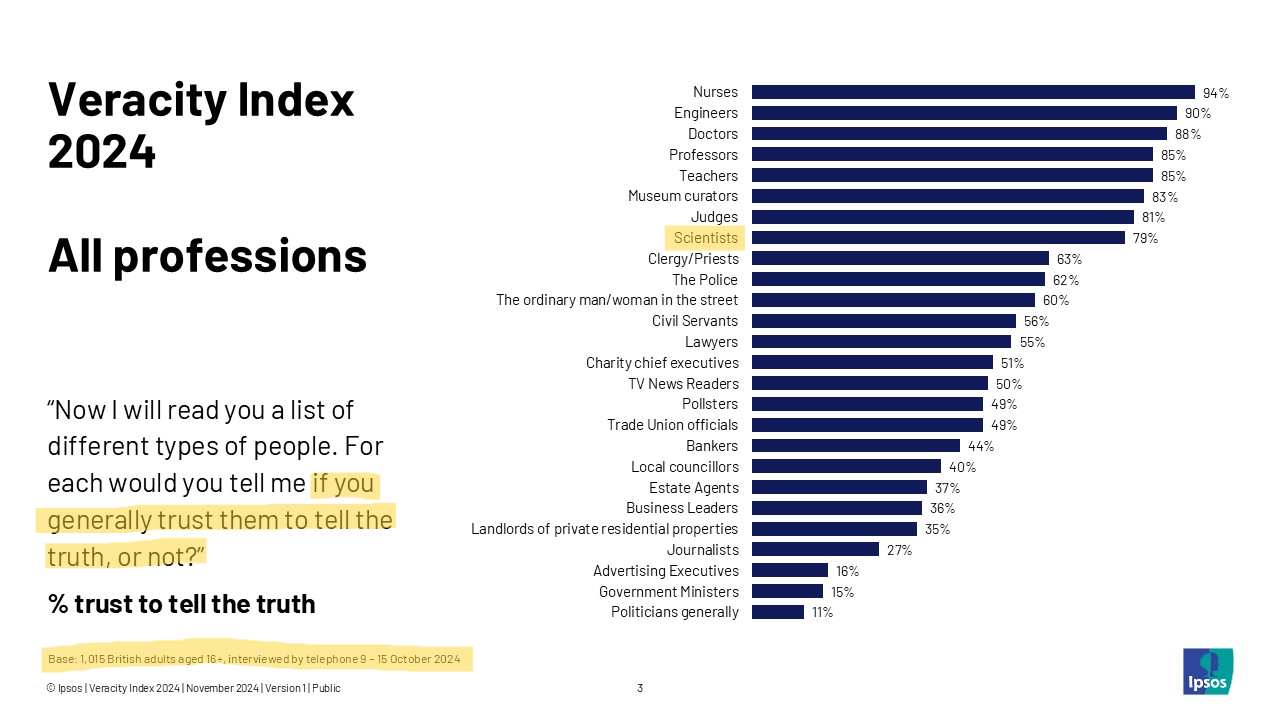

Probe your prejudices: In which profession do you have the highest trust to tell the truth? And the lowest?

Data from 1,015 UK adults, 2024

Sources: Veracity Index 2024, Ipsos; Seyd, B. (2025). What is trust (in science and scientists) and is it in crisis?. Current Opinion in Psychology, 67. https://doi.org/10.1016/j.copsyc.2025.102201

Public trust in research

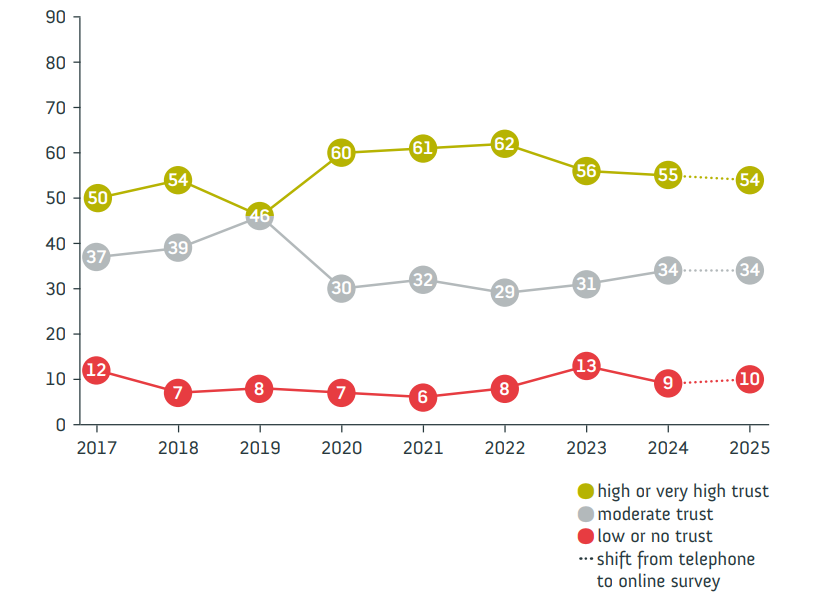

German survey “Wissenschaftsbarometer”

“Wie sehr vertrauen Sie in Wissenschaft und Forschung?”

Source: Wissenschaft im Dialog/Verian: www.sciencebarometer.com. Minimum of 1,000 respondents in each survey wave.

Takeaways from multiple international studies

- Trust in scientists remains relatively high in many countries

- Overall trust levels in research remain stable

- But: public skepticism about scientists’ integrity and transparency

Seyd, B. (2025). What is trust (in science and scientists) and is it in crisis?. Current Opinion in Psychology, 67. https://doi.org/10.1016/j.copsyc.2025.102201

The used car dealer (10 min)

a.k.a. “Trust in a situation with asymmetric information”

- Form two groups: the buyers and the dealers.

- Given the bad reputation of car dealers:

- Buyers: What strategies could you use to ensure you are making a trustworthy purchase?

- Dealers: What would convince buyers that you are not one of the bad apples and your car has really good quality?

- Discuss in groups; present your strategies

Inspired by Vazire, S. (2017). Quality Uncertainty Erodes Trust in Science. Collabra: Psychology, 3(1), 1. https://doi.org/10.1525/collabra.74. Images from ChatGPT.

Used cars vs. science

- Parallel in science: product = manuscript; seller = author; buyer = reader

“When there are asymmetries in the information that the seller and the buyer have, the buyers cannot be certain about the quality of the products”

“by keeping vital information private – the raw data, the original design and analysis plan, the exploratory analyses that were conducted along the way to the final analysis – authors are hiding valuable information and preventing consumers of their manuscript from being certain about its quality. Just like sellers of used cars keep things […] from the buyers.”

“For many non-scientists, learning that transparency is not the norm in science comes as a surprise. […] it must seem obvious that scientists should be held to a higher standard than used car salespeople.”

Quotes from Vazire, S. (2017).

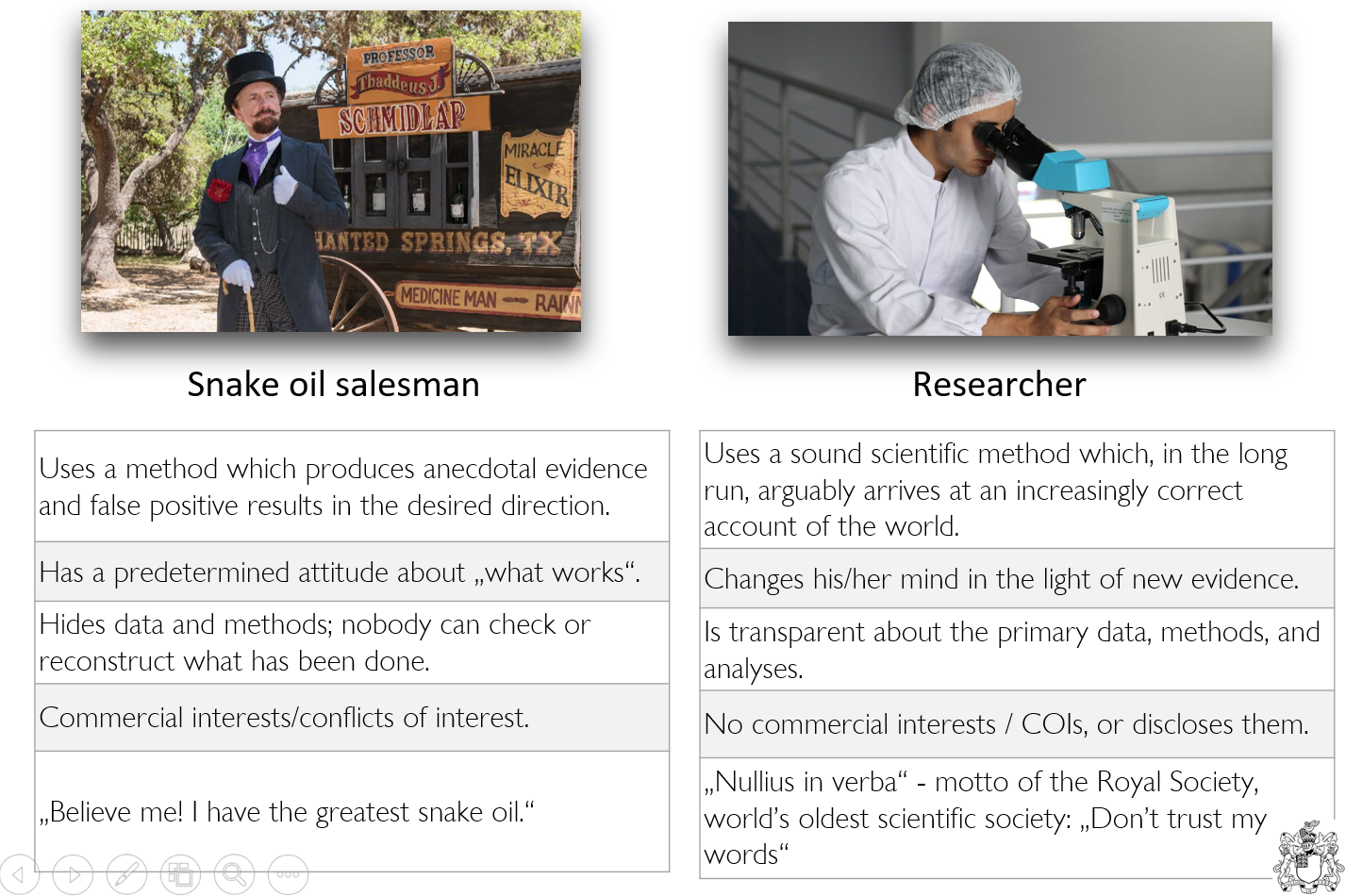

A stark contrast?

Nullius in verba (“take nobody’s word for it”)

The motto of the Royal Society, the oldest scientific society in the world

Royal Society logo by MostEpic - Own work, CC BY-SA 4.0, Link; Car dealer by ChatGPT.

Should we trust in science?

Definitions of key terms

Replicability and reproducibility

- Replicability: The extent to which design, implementation, analysis, and reporting of a study enable a third party to repeat the study and assess its findings.

- Replicability is a property of the original study - it makes no statement about whether a replication has been attempted yet or whether that was successful or not.

- Conceived as a continuum (hence the extent of replicability)

- Replication: A study that repeats all or part of another study including new data collection and allows researchers to compare their findings. Can be successful or not successful.

- Reproduction: The act of re-computing some numerical results using the original data (with or without the original code)

- Reproducibility: The extent to which the results of an original study agree with those of replication or reproduction studies.

Definitions of Replicability and Replication are from the iRISE glossary v2 by Voelkl et al. (2025). Note that no consensus about the meaning of these words exists - they are used differently in different disciplines.

Definitions of key terms

Prototypes of replication studies

- exact/direct replication: (Virtually) Everything is inherited from the original study except the sample itself

- close replication: Similar to direct replication, but with some (necessary) modifications to the methods or procedures; e.g. translations

- conceptual replication: The same substantive research question is tested, but with different operationalizations

- replication and extension: Doing a direct replication and adding new elements (also called “direct+” replication)

Note: The terminology around replication varies between fields, and the boundaries between these types are blurry.

Definitions of key terms

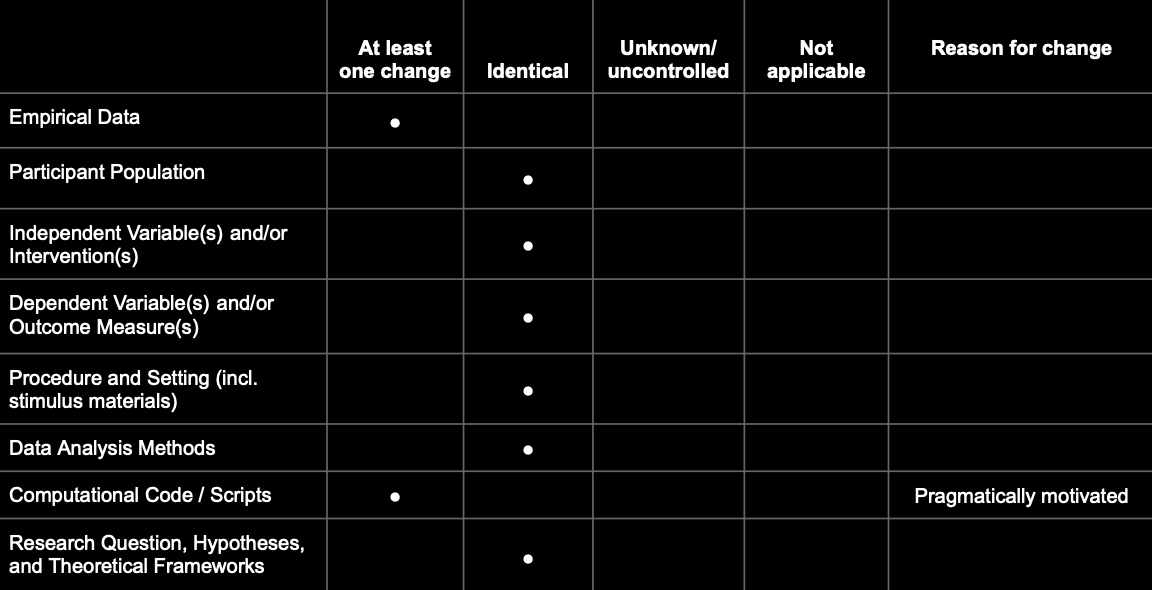

Better approach: Define exactly what changed/stayed identical

A prototypical direct replication:

Definitions of key terms

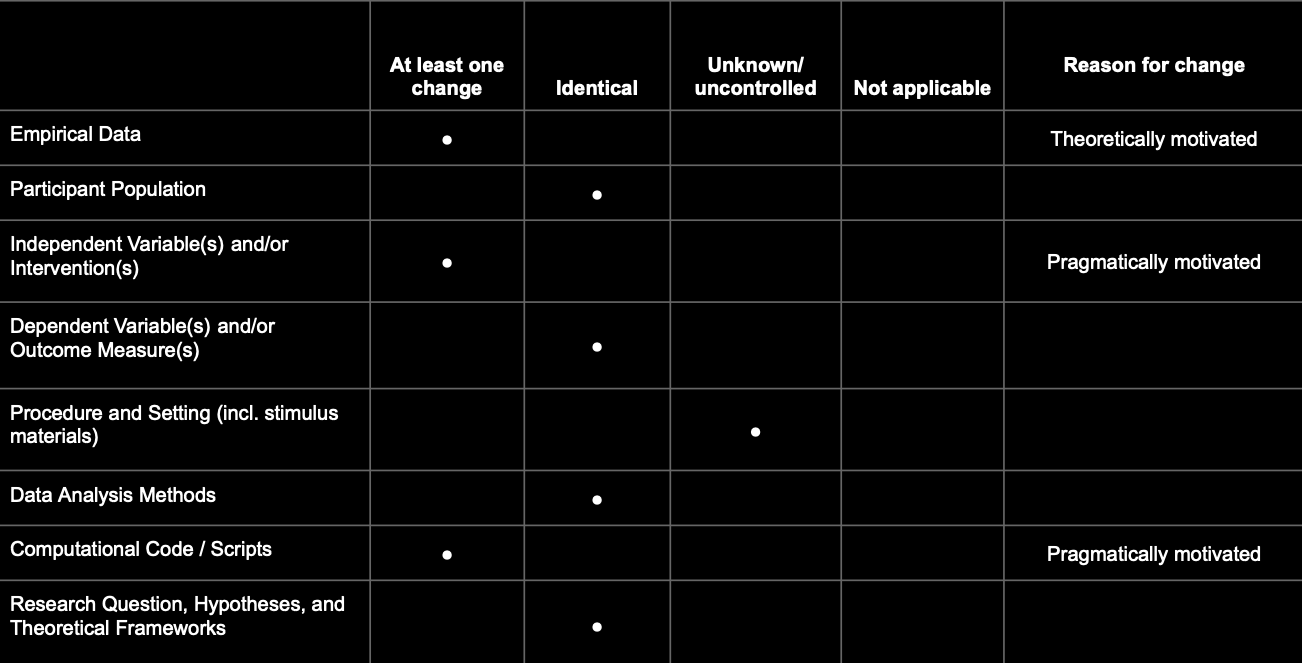

Better approach: Define exactly what changed/stayed identical

A realistic example:

The qualifiers “direct”, “conceptual”, “close” etc. replications are prototypical configurations of this matrix.

Definitions of key terms

p-hacking and QRPs

Replicability and reproducibility

Additional exercises

Want to practice how to distinguish the two? Skip to the end of the slides for additional exercises on replicability vs. reproducibility.

Reproducibility Project:Psychology (RP:P, 2015)

The first large-scale replication project in the field

- Close/exact replications of 100 studies from 3 top journals from psychology; aiming for high power (larger sample sizes than original studies)

- Different ways to judge replication success: evaluated based on effect sizes, p-values, subjective assessment of replication teams

- Contacted original study authors when necessary

- Note: With today’s understanding of the terms, the project should have been called “Replicability Project:Psychology”


What did they find?

A large portion of the replications produced weaker evidence for the original findings, despite using materials provided by the original authors.

Open Science Collaboration. (2015). Estimating the reproducibility of psychological science. Science, 349(6251), aac4716.

Reproducibility Project:Psychology (RP:P, 2015)

Results

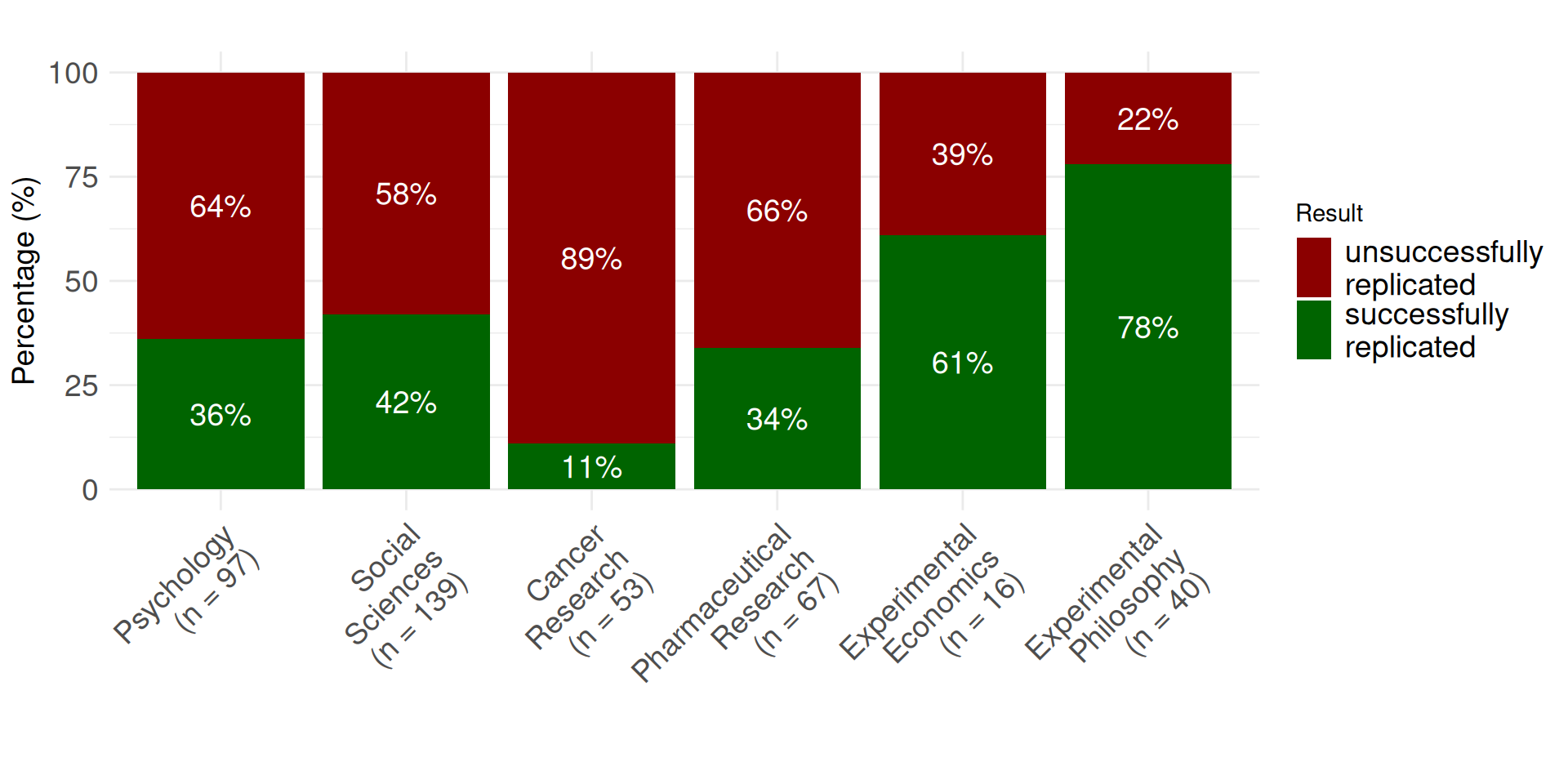

Replication rates across disciplines

Up to 2018

Begley, C. G., & Ellis, L. M. (2012); Camerer et al (2016); Chang & Li (2015); Cova et al. (2018); Open Science Collaboration (2015); Social Science: Combined sample of systematically sampled projects (RPP, SSRP, EERP); Prinz, F., Schlange, T., & Asadullah, K. (2011); Protzko et al. (2023)

Replication rates in the social and behavioural sciences

Tyner et al. (2026):

- Authors attempted replications of 274 claims of positive results from 164 quantitative papers (published between 2009 and 2018)

- ~55% of positive claims replicated successfully

| Discipline | Replication attempts (successful / total) | Percentage successful |
|---|---|---|
| Business | 17 / 36 | 47.2% |
| Economics | 10.2 / 24 | 42.5% |
| Education | 8.2 / 13 | 63.1% |
| Political science | 7.8 / 15 | 52.0% |
| Psychology | 28.4 / 58 | 49.0% |
| Sociology | 9.2 / 18 | 51.1% |

Tyner, S. K., Abatayo, A. L., Dayley, M., et al. (2026). Investigating the replicability of the social and behavioural sciences. Nature. https://doi.org/10.1038/s41586-025-10078-y

Not a recent issue

Adapted from Dr. Malika Ihle: https://osf.io/u3znx

Why did not more studies replicate?

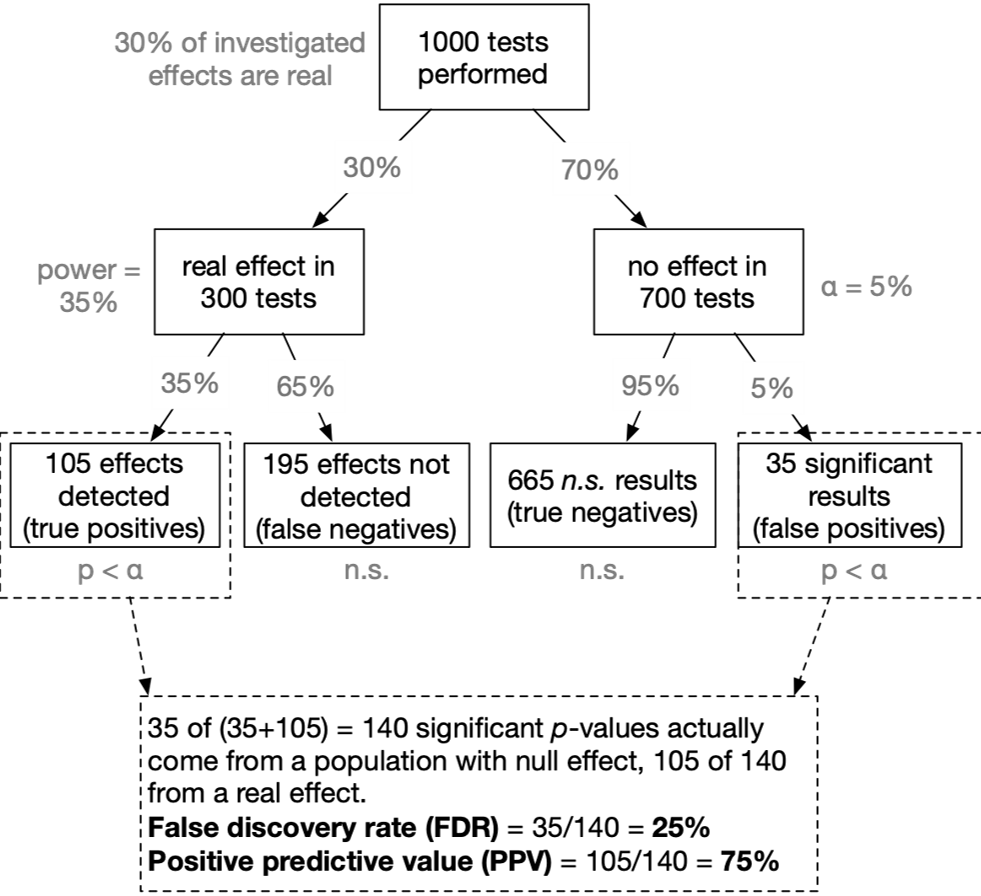

Low power and low p(H1)

Live Poll

Given that in a given study \(p < .05\): What is the probability that a real effect exists in the population ➙ \(p(H_1|D)\)?

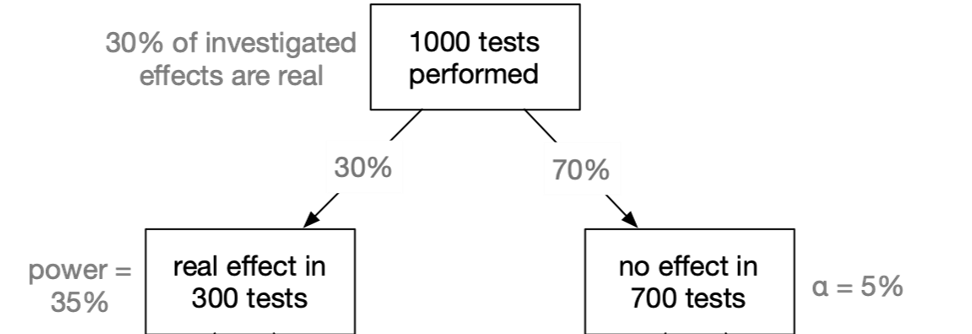

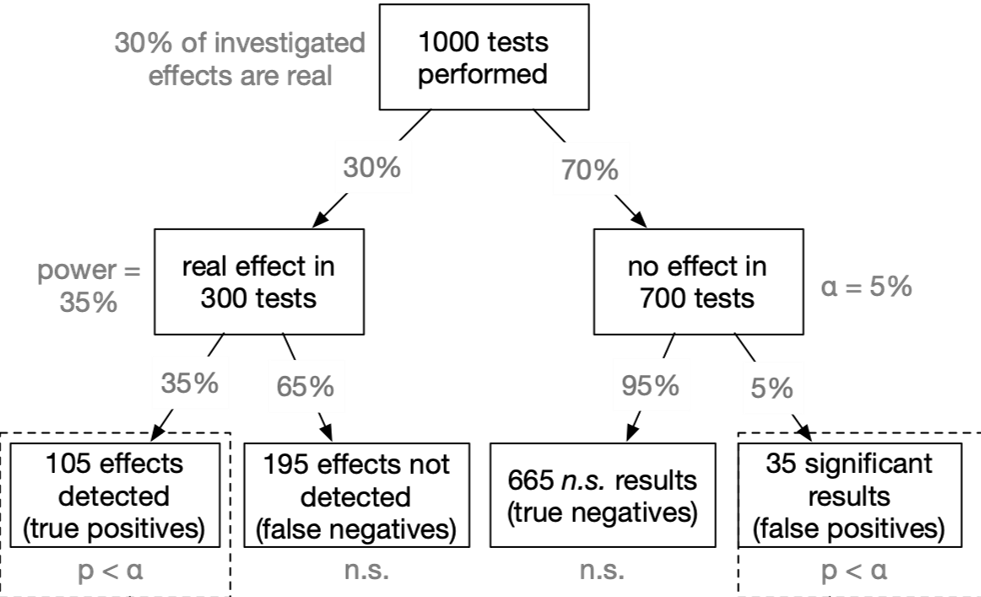

False Discovery Rate 1

Nuzzo, R. (2014). Statistical errors. Nature. Colquhoun, D. (2014). An investigation of the false discovery rate and the misinterpretation of p-values. Royal Society Open Science, 1(3), 140216. https://doi.org/10.1098/rsos.140216

False Discovery Rate 2

Nuzzo, R. (2014). Statistical errors. Nature. Colquhoun, D. (2014). An investigation of the false discovery rate and the misinterpretation of p-values. Royal Society Open Science, 1(3), 140216. https://doi.org/10.1098/rsos.140216

False Discovery Rate 3

Nuzzo, R. (2014). Statistical errors. Nature. Colquhoun, D. (2014). An investigation of the false discovery rate and the misinterpretation of p-values. Royal Society Open Science, 1(3), 140216. https://doi.org/10.1098/rsos.140216

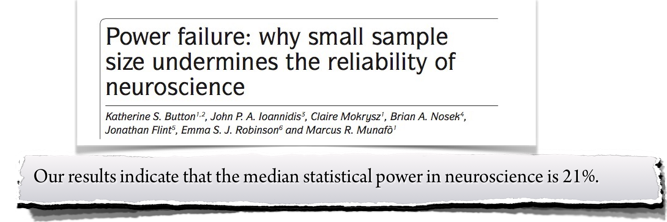

Applied to neuroscience

- Assuming that our tested hypotheses are true in 30% of all cases (which is a not too risky research scenario):

- A typical neuroscience study must “fail” (\(p > \alpha\)) in 90% of all cases

- In the most likely outcome of \(p > .05\), we have no idea whether a) the effect does not exist, or b) we simply missed the effect. Virtually no knowledge has been gained.
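The 90% figure can be retraced with a short calculation. This is only a sketch: it assumes a statistical power of \(1-\beta = 0.20\), a value the slide does not state and that is used here purely for illustration, together with \(p(H_1) = 0.30\) and \(\alpha = .05\).

\[
P(p > \alpha) = 1 - \big[\, p(H_1)\,(1-\beta) + (1 - p(H_1))\,\alpha \,\big] = 1 - (0.30 \times 0.20 + 0.70 \times 0.05) = 1 - 0.095 \approx 0.90
\]

Under these assumptions, roughly 9 out of 10 studies end with a non-significant result, whether or not the tested effect exists.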

Button, K. S., Ioannidis, J. P. A., Mokrysz, C., Nosek, B. A., Flint, J., Robinson, E. S. J., & Munafò, M. R. (2013). Power failure: why small sample size undermines the reliability of neuroscience. Nat Rev Neurosci, 14(5), 365–376. doi:10.1038/nrn3475

The evidential strength of underpowered studies

“When a study is underpowered it most likely provides only weak inference. Even before a single participant is assessed, it is highly unlikely that an underpowered study provides an informative result.”

“Consequently, research unlikely to produce diagnostic outcomes is inefficient and can even be considered unethical. Why sacrifice people’s time, animals’ lives, and societies’ resources on an experiment that is highly unlikely to be informative?”

Schönbrodt, F. D. & Wagenmakers, E.-J. (2018). Bayes Factor Design Analysis: Planning for compelling evidence. Psychonomic Bulletin & Review, 25, 128-142. doi:10.3758/s13423-017-1230-y. Open Access

Conclusion

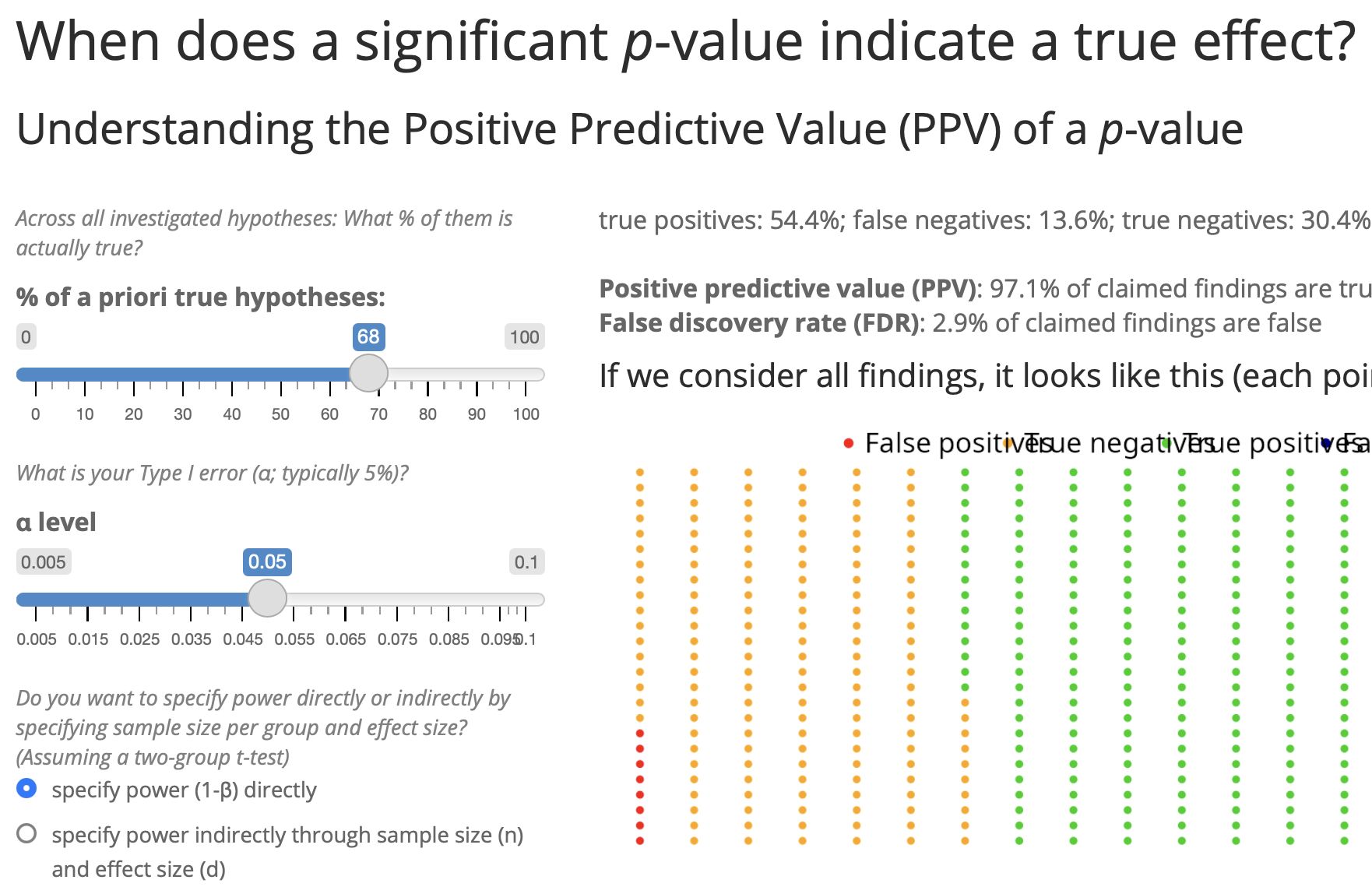

Given that in a given study \(p < .05\): What is the probability that a real effect exists in the population ➙ \(p(H_1|D)\)?

- → In the typical situation in psychology (\(p(H_1)\) = 10%; power = 35%): The probability that a significant p-value indicates a true effect is only 44%.

- Try it out yourself! https://shiny.psy.lmu.de/felix/PPV
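The 44% can be checked by hand. The following minimal sketch computes \(p(H_1|D)\) for a significant result from the prior probability \(p(H_1)\), the power, and \(\alpha\). The function name `positive_predictive_value` and the default \(\alpha = .05\) are assumptions made for this illustration; they are not taken from the app linked above.

```python
def positive_predictive_value(prior, power, alpha=0.05):
    """Probability that a significant result reflects a true effect.

    prior: p(H1), the share of tested hypotheses that are true
    power: probability of p < alpha when the effect exists
    alpha: probability of p < alpha when the effect does not exist
    """
    true_positives = prior * power
    false_positives = (1 - prior) * alpha
    return true_positives / (true_positives + false_positives)


# Values from the slide: p(H1) = 10%, power = 35%
ppv = positive_predictive_value(prior=0.10, power=0.35)
print(f"p(H1 | significant) = {ppv:.0%}")  # prints 44%
```

Raising either the prior or the power in the call shows how quickly the value improves, which is the same exploration the linked app offers interactively.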

Career incentives in science

How to become a professor?

- What is the single most important thing you need to become a professor?

- Survey among N = 1,453 psychology researchers, 66% of whom were actually members of a professorship hiring committee

| Actual (not desired) relevance in professorship hiring committees | Rank |
|---|---|
| Number of peer-reviewed publications | 1 |
| Fit of research profile to the hiring department | 2 |
| Quality of research talks | 3 |
| Number of publications | 4 |
| Volume of acquired third party funding | 5 |
| Number of first authorships | 6 |

Abele-Brehm, A. E., & Bühner, M. (2016). Wer soll die Professur bekommen? Psychologische Rundschau, 67(4), 250–261. http://doi.org/10.1026/0033-3042/a000335

How to become a professor?

Publication Pressure

“Researchers are not rewarded for being right, but rather for publishing a lot.”

Consequence: “Publish or Perish” culture

Researchers try to publish as much as they can and to outperform their peers (Schmidt et al., 2021).

Nelson, Simmons, & Simonsohn (2012); Nosek, Spies, Motyl (2012); Munafo (2016)

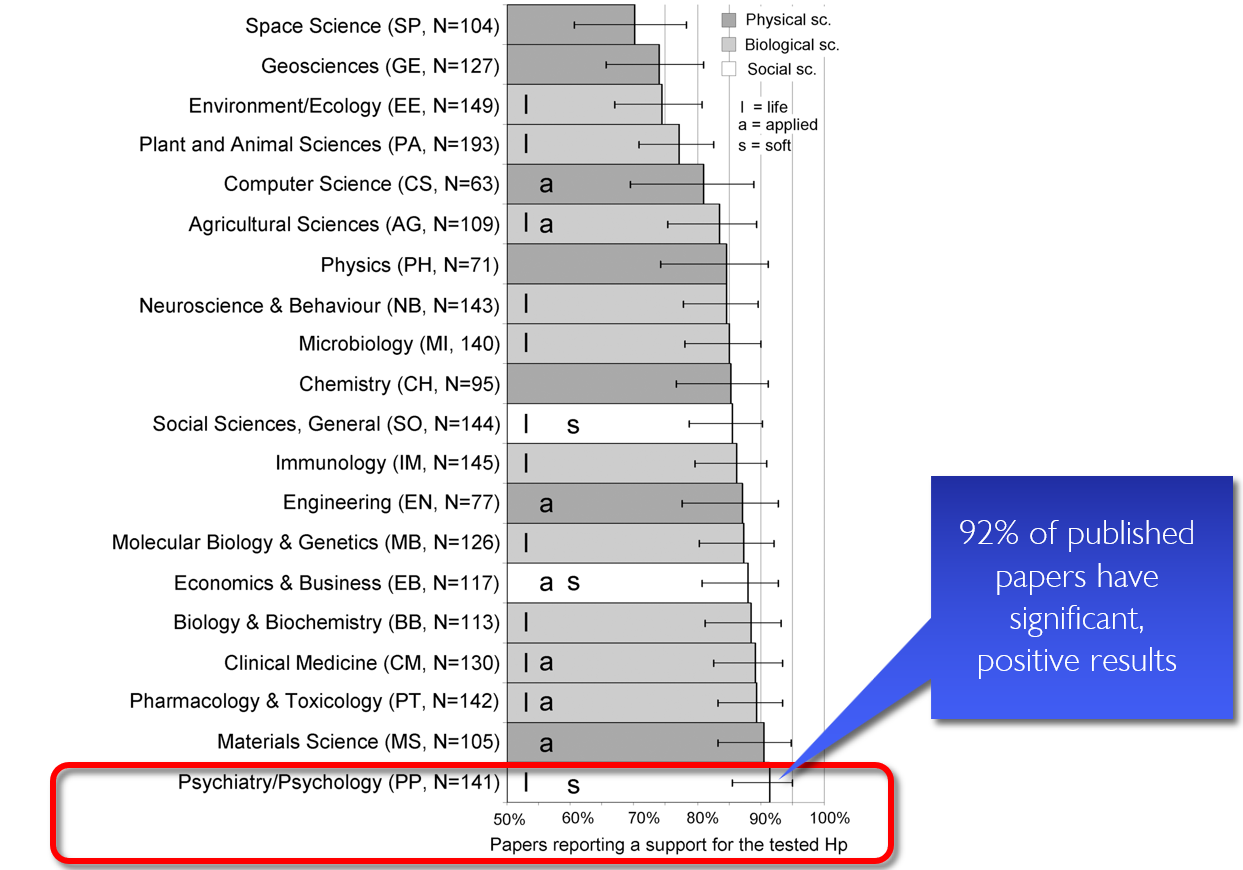

OK, I need a lot of publications …

🤔 How do I get lots of publications?

Fanelli, D. (2010). “Positive” results increase down the hierarchy of the sciences. PLOS ONE, 5, e10068. https://doi.org/10.1371/journal.pone.0010068

Publication bias

“If my study works, I can publish it. If it does not, let’s hide it in the drawer.”

Song, F., Hooper, L., & Loke, Y. K. (2013). Publication bias: what is it? How do we measure it? How do we avoid it?. Open Access Journal of Clinical Trials, 71-81. https://doi.org/10.2147/OAJCT.S34419

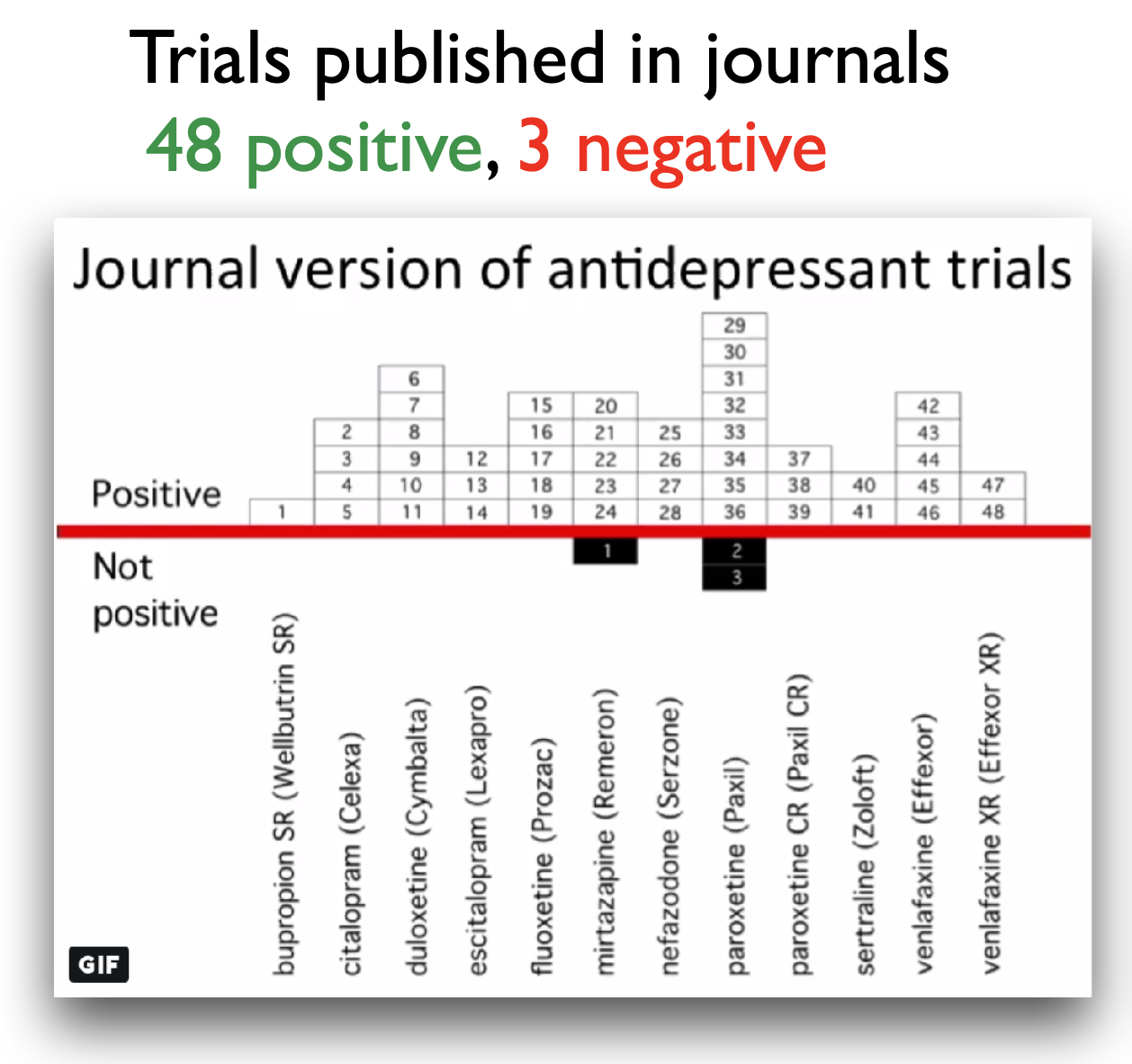

Example publication bias

Turner, E. H., Matthews, A. M., Linardatos, E., Tell, R. A., & Rosenthal, R. (2008). Selective publication of antidepressant trials and its influence on apparent efficacy. New England Journal of Medicine, 358(3), 252-260. https://doi.org/10.1056/NEJMsa065779

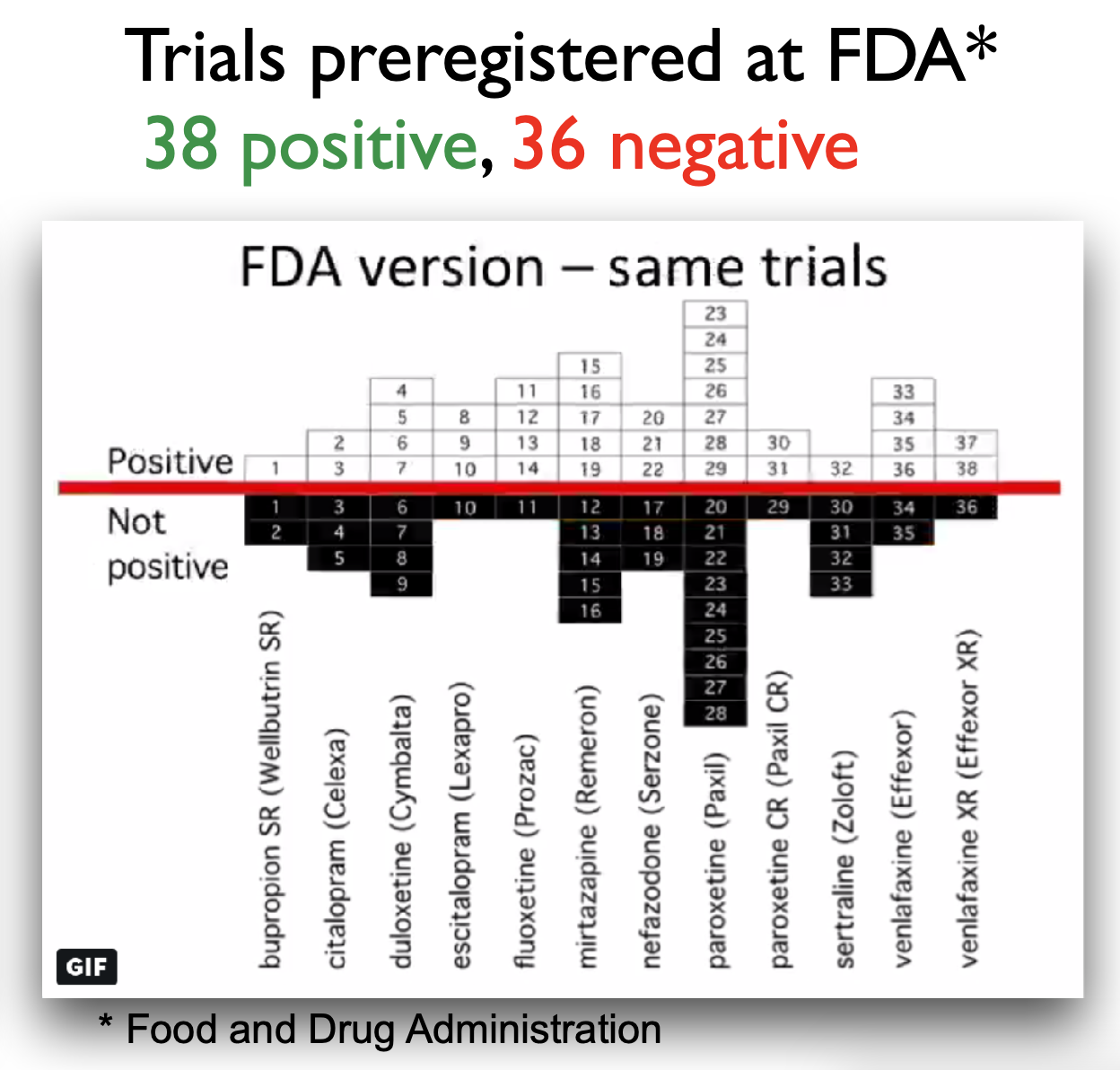

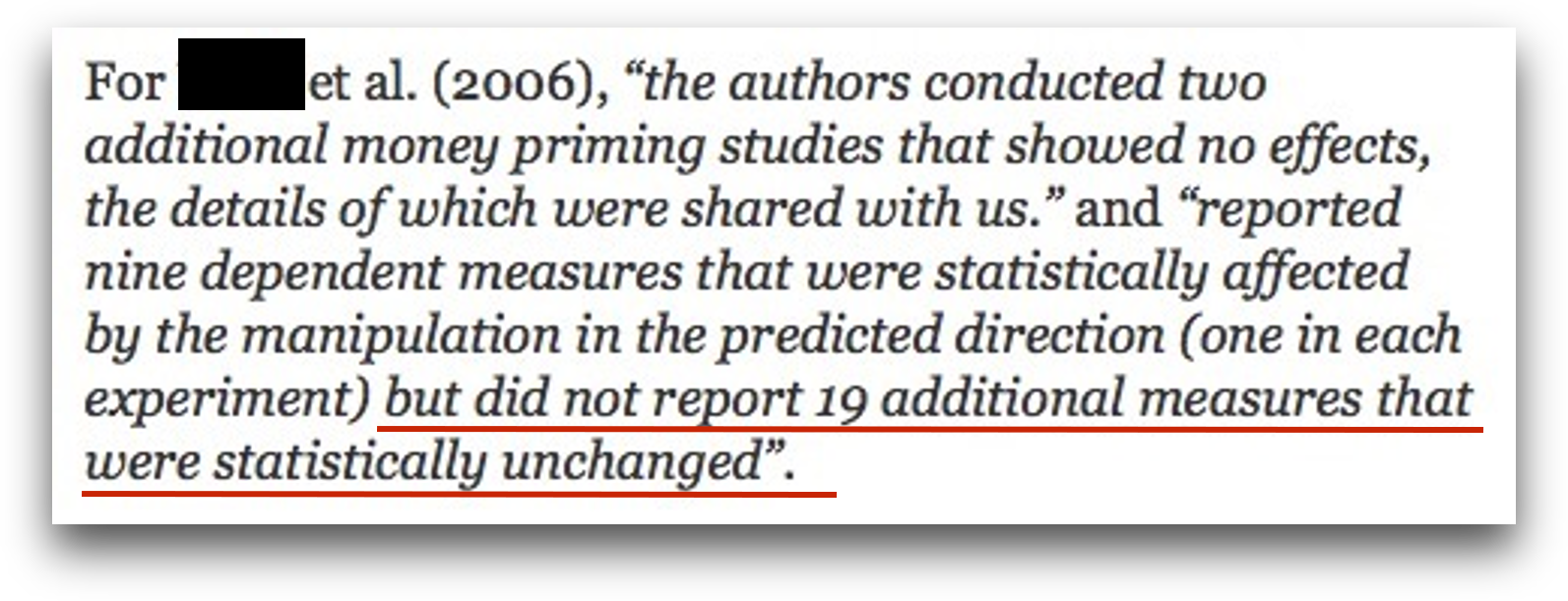

More than just publication bias

- All conducted studies

- Study publication bias: non-publication of an entire study

- Outcome reporting bias: non-publication of negative outcomes within a published article or switching the status of (non-significant) primary and (significant) secondary outcomes

- Spin: authors conclude that the treatment is effective despite non-significant results on the primary outcome

- Citation bias: Studies with positive results receive more citations than negative studies

De Vries, Y. A., Roest, A. M., de Jonge, P., Cuijpers, P., Munafò, M. R., & Bastiaansen, J. A. (2018). The cumulative effect of reporting and citation biases on the apparent efficacy of treatments: the case of depression. Psychological Medicine, 48(15), 2453–2455. https://doi.org/10.1017/S0033291718001873

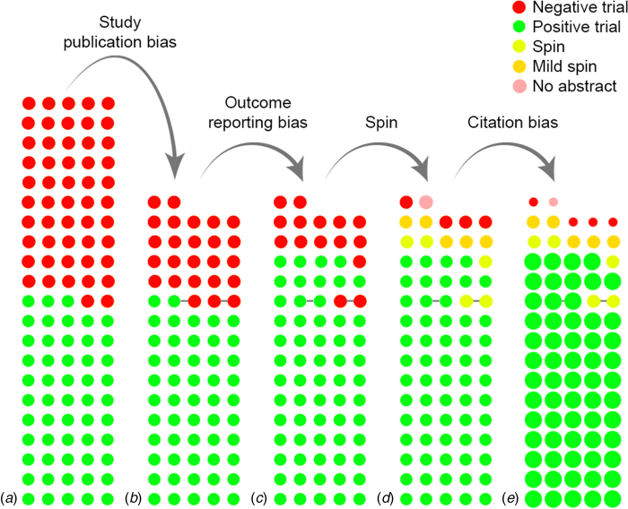

Confirmation Bias

a.k.a. “Thought so already” bias

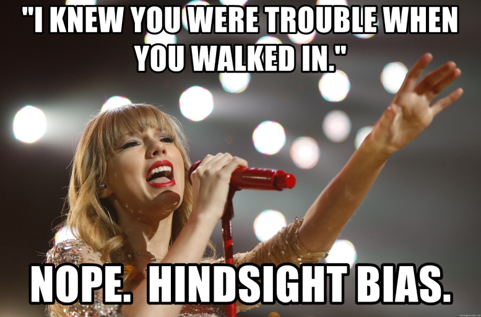

Hindsight Bias

a.k.a. “I knew it” bias

- The tendency to believe, after seeing the results of a study, that the outcome was obvious or predictable all along, even if it was not.

Practical exercise 4

Task: Match the bias to its description.

| Bias | Description |
|---|---|
| 1. Confirmation bias | A. Non-publication of negative outcomes within a paper, or switching non-significant primary outcomes with significant secondary ones. |
| 2. Spin | B. Studies with positive results receive more citations than negative studies. |
| 3. Study publication bias | C. After learning the outcome, believing “I knew it all along.” |
| 4. Hindsight bias | D. Non-publication of an entire study (e.g., trials with null results never submitted). |
| 5. Outcome reporting bias | E. Tendency to seek or interpret information in ways that confirm existing beliefs. |
| 6. Citation bias | F. Authors conclude the treatment is effective despite non-significant primary outcomes. |

Pre-break survey

Pre-break survey

Based on what you learnt so far, how would you now rate your trust in published scientific findings on a scale from 1 - 5? (1 = not trusting any of the findings, 2 = trusting only some findings, 3 = trusting about half of the findings, 4 = trusting the majority of the findings, 5 = trusting all findings)

1

2

3

4

5

Pre-break survey

What does replicability in research mean?

Obtaining the same results using the original dataset and code

Obtaining consistent results when a new study collects new data using the same methods

Publishing results in more than one journal

Repeating the statistical analysis multiple times

Pre-break survey

What is publication bias in research?

The tendency for journals to publish studies only from well-known researchers

The requirement that all published studies must be peer-reviewed

The practice of publishing the same study in multiple journals

The tendency for studies with positive results to be published more often than studies with non-significant or negative results

Break! 15 minutes

Post-break survey discussion

What do we see in the results?

p-hacking and other problems …

Warning

Do not try the following at home.

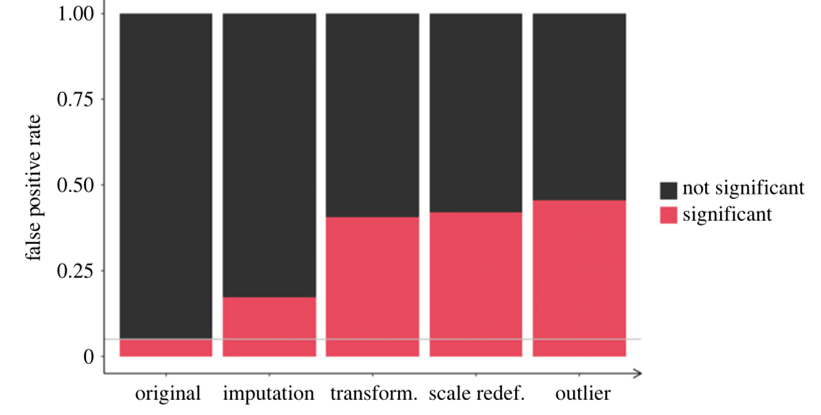

“Hack” 1: Add lots of outcome variables

- For two outcome variables: False positive rate increases from 5% to 9.5%

- For five outcome variables (and one-sided testing): False positive rate increases from 5% to 41%
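The inflation can be sketched with the familywise error rate \(1 - (1 - \alpha)^k\) for \(k\) tests. This sketch assumes independent outcome variables, which the slide does not state. Under independence, two outcomes give 9.75%; the slide's slightly lower 9.5% would be expected if the outcomes are correlated (an assumption about where that figure comes from). The 41% matches five independent tests at a per-test rate of 10%, which is one reading of "one-sided testing": testing at 5% in whichever direction looks promising. The function name `familywise_error_rate` is hypothetical.

```python
def familywise_error_rate(alpha, n_tests):
    """Chance of at least one false positive across n_tests independent tests."""
    return 1 - (1 - alpha) ** n_tests


# Two independent outcomes, each tested at alpha = 5%
two_outcomes = familywise_error_rate(alpha=0.05, n_tests=2)

# Five independent outcomes, one-sided 5% test in either direction,
# i.e. an effective per-test rate of 10% (assumed reading of the slide)
five_outcomes = familywise_error_rate(alpha=0.10, n_tests=5)

print(f"two outcomes:  {two_outcomes:.2%}")   # 9.75%
print(f"five outcomes: {five_outcomes:.0%}")  # 41%
```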

“Hack” 2: Run as many comparisons as possible

- Run as many different comparisons on different outcomes, subgroups, time windows etc. as you can

- Only report the comparisons that produced a statistically significant result

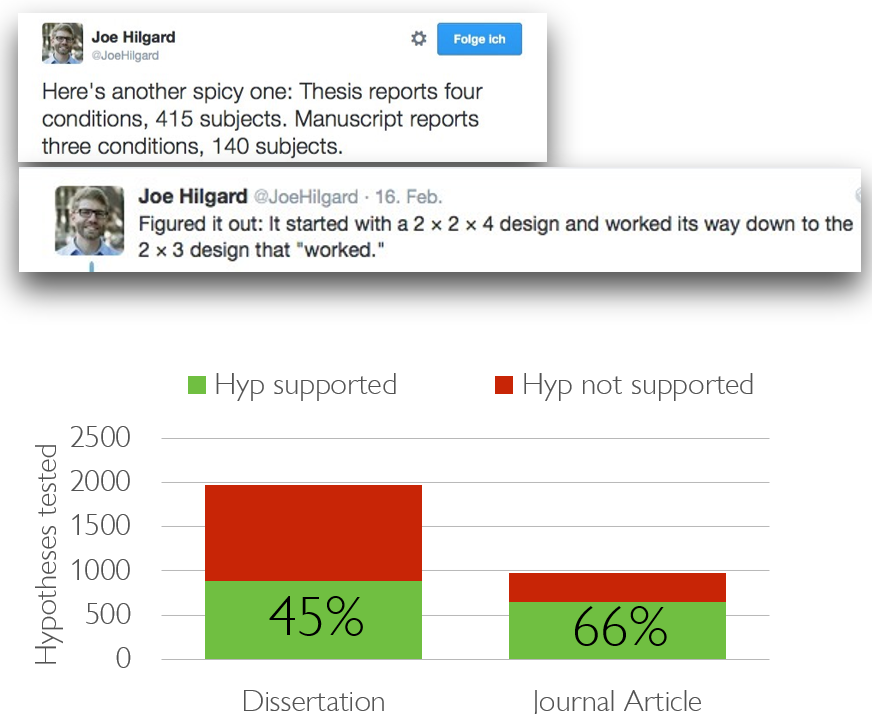

O’Boyle, E. H., Banks, G. C., & Gonzalez-Mulé, E. (2017). The Chrysalis Effect: How ugly initial results metamorphosize into beautiful articles. Journal of Management, 43(2), 376–399. https://doi.org/10.1177/0149206314527133

“Hack” 2: Run as many comparisons as possible

Example: Subgroup Analyses

Research question: Do aggressive primes trigger aggressive behavior?

“A second study in Turner, Layton, and Simons (1975) collects a larger sample of men and women driving vehicles of all years. The design was a 2 (Rifle: present, absent) × 2 (Bumper Sticker: “Vengeance”, absent) design with 200 subjects.”

➙ presumably, no effect … (yet! Do not give up so easily)

Cited from Joe Hilgard’s excellent blog post on the Weapon Priming Effect; Turner et al. (1975). Naturalistic studies of aggressive behavior: aggressive stimuli, victim visibility, and horn honking. JPSP, 31(6), 1098–1107.

“Hack” 2: Run as many comparisons as possible

Example: Subgroup Analyses

“They divide this further by driver’s sex and by a median split on vehicle year. They find that the Rifle/Vengeance condition increased honking relative to the other three, but only among newer-vehicle male drivers, F(1, 129) = 4.03, p = .047. But then they report that the Rifle/Vengeance condition decreased honking among older-vehicle male drivers, F(1, 129) = 5.23, p = .024! No results were found among female drivers.”

Cited from Joe Hilgard’s excellent blog post on the Weapon Priming Effect; Turner et al. (1975). Naturalistic studies of aggressive behavior: aggressive stimuli, victim visibility, and horn honking. JPSP, 31(6), 1098–1107.

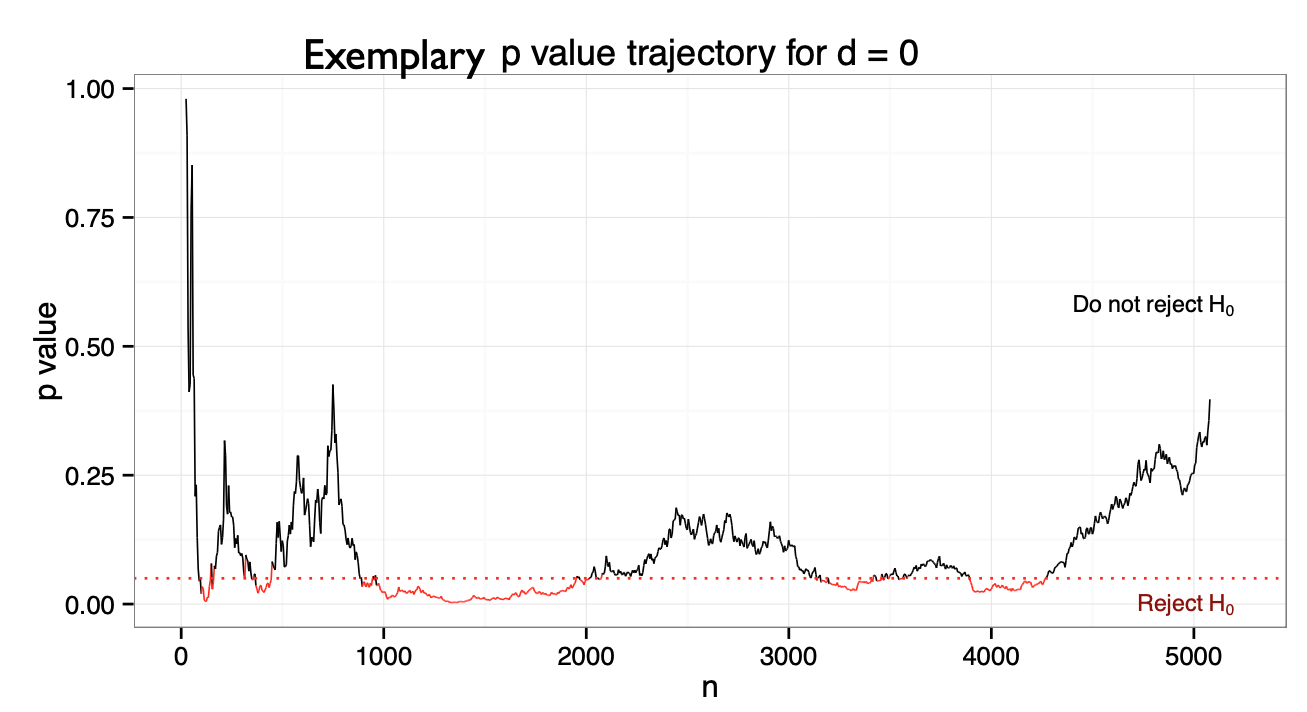

“Hack” 3: Optional stopping

- Scenario: You collected a sample of n = 60 and obtained a p-value of .07.

- “Trending towards significance”! What is your intuition about how to proceed?

“Hack” 3: Optional stopping

- Optional stopping: Collect an initial sample, analyze the results, add participants if the results are not significant

- But under \(H_0\), the p-value is a random walk between 0 and 1, fluctuating forever.

- Eventually, it will “dip” below the critical threshold

- The false positive rate depends on the number of optional sample size increases:

- One analysis: α = 5%

- Two analyses: α = 11%

- But with enough increases, it can be pushed towards 100%!

Armitage, P., McPherson, C. K., & Rowe, B. C. (1969). Repeated significance tests on accumulating data. Journal of the Royal Statistical Society. Series A (General), 132, 235–244.
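The effect of repeated testing can be illustrated with a small simulation. This is a sketch under stated assumptions, not the calculation behind the figures above: it uses a two-sided one-sample z-test with known variance, equally sized batches of participants, and data generated under \(H_0\). The exact rates depend on the test and on how much data each look adds, so the simulated values need not match the slide. The names `optional_stopping_fpr` and `n_per_look` are hypothetical.

```python
import random
from statistics import NormalDist


def optional_stopping_fpr(n_looks, n_per_look=20, alpha=0.05,
                          n_sims=4000, seed=1):
    """Share of simulated null studies that reach p < alpha at any look."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    false_positives = 0
    for _sim in range(n_sims):
        total, n = 0.0, 0
        for _look in range(n_looks):
            # Add one more batch of participants; the true effect is zero
            for _participant in range(n_per_look):
                total += rng.gauss(0.0, 1.0)
            n += n_per_look
            z = total / n ** 0.5  # z statistic for the sample mean
            if abs(z) > z_crit:
                false_positives += 1  # stop and "publish" at first success
                break
    return false_positives / n_sims


for looks in (1, 2, 5, 10):
    rate = optional_stopping_fpr(looks)
    print(f"{looks:>2} analyses: false positive rate = {rate:.1%}")
```

With a single analysis the rate stays close to the nominal 5%; every additional look at the data adds another chance for the p-value to dip below the threshold.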

“Hack” 4: Drop participants you do not like

- Selectively exclude data or outliers after seeing the results, until the results are satisfying (i.e., until significance has been reached)

“Hack” 5: HARK-ing

- Hypothesizing After Results are Known = presenting an exploratory finding as if it had tested a hypothesis that was in fact created only after analysing the results

- a.k.a. “The Texas sharpshooter fallacy”

Combining these “hacks”

- Combining some of these hacks (aka questionable research practices) can raise false positive rates from 5% to > 50%!

- With p-hacking, the logic of the p-value is corrupted and “renders the reported p-values essentially uninterpretable.”

A shocking report from an experienced researcher

A note to remember

Warning

The so-called “hacks” on the past few slides represent questionable research practices. Do not try at home.

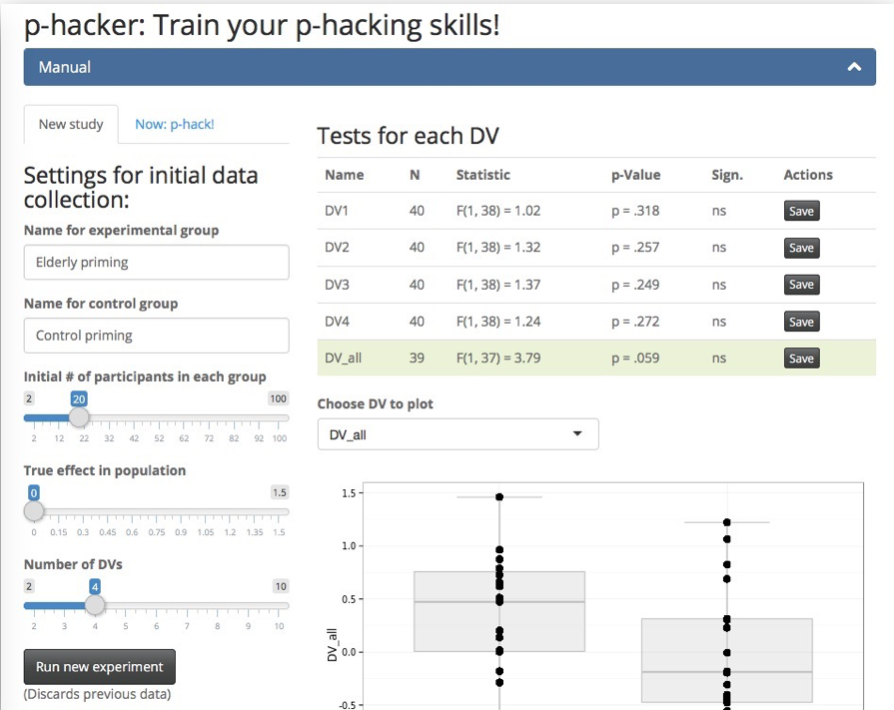

Practice p-hacking yourself

with the P-Hacker App

http://shinyapps.org/apps/p-hacker/

Practical exercise 5

Scenario: You are reviewing a study examining whether drinking white tea improves short-term memory, where the researchers report:

Hypothesis: White tea improves memory test scores.

Sample size: 28 participants per group (tea-drinkers vs. water-only-drinkers)

Results:

- Effect was “stronger in women” (p = 0.049)

- Effect “even stronger when excluding two outliers” (p = 0.044)

- No effect in men (p = 0.31)

- Reaction time difference significant (p = 0.046)

Conclusion: The results show that white tea reliably improves cognitive performance.

Which potential p-hacking strategies are at play here?

Practical exercise 5

Scenario: You are reviewing a study examining whether drinking white tea improves short-term memory, where the researchers report:

Hypothesis: White tea improves memory test scores.

Sample size: 28 participants per group (tea-drinkers vs. water-only-drinkers)

Results:

- Effect was “stronger in women” (p = 0.049)

- Effect “even stronger when excluding two outliers” (p = 0.044)

- No effect in men (p = 0.31)

- Reaction time difference significant (p = 0.046)

Conclusion: The results show that white tea reliably improves cognitive performance.

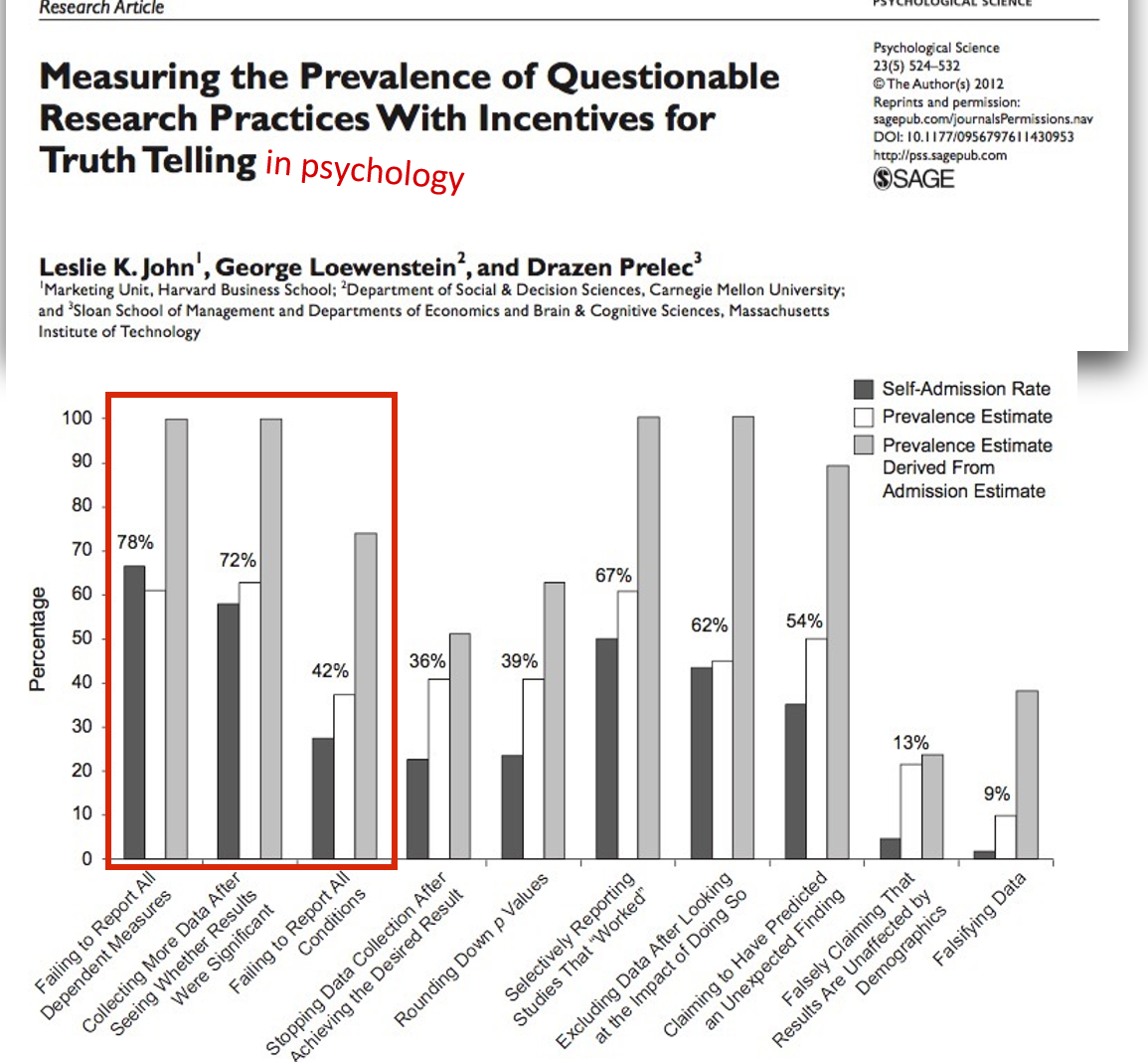

Does this actually happen?

Come on. Surely not..? 😳

John, L. K., Loewenstein, G., & Prelec, D. (2012). Measuring the prevalence of questionable research practices with incentives for truth telling. Psychological science, 23(5), 524-532.

.. and across fields?

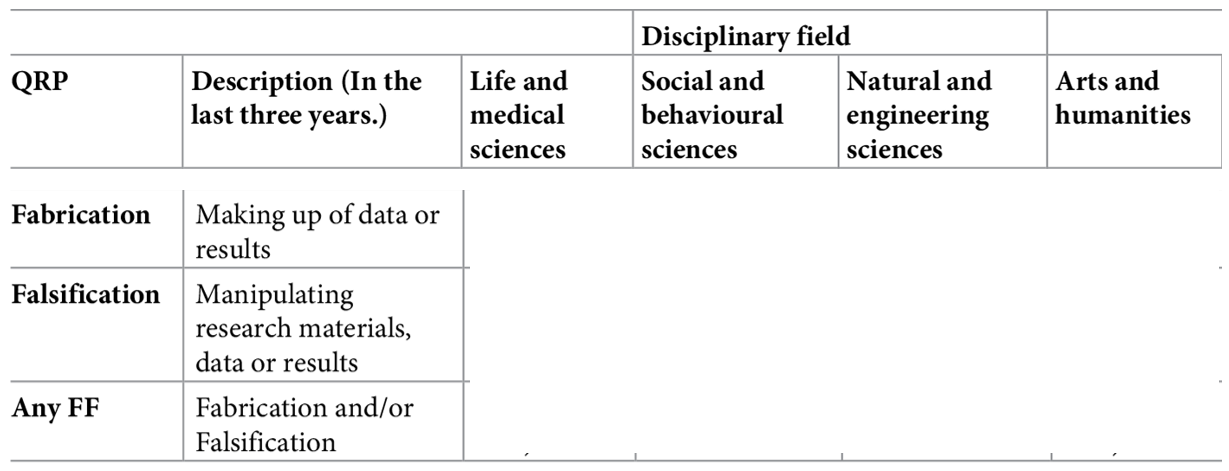

- Survey among 6,813 academic researchers in The Netherlands: Self-reported prevalence of fabrication and falsification in the last 3 years

Gopalakrishna, G., ter Riet, G., Vink, G., Stoop, I., Wicherts, J. M., & Bouter, L. M. (2022). Prevalence of questionable research practices, research misconduct and their potential explanatory factors: A survey among academic researchers in The Netherlands. PLOS ONE, 17(2), e0263023. https://doi.org/10.1371/journal.pone.0263023

.. and across fields?

- Survey among 6,813 academic researchers in The Netherlands: Self-reported prevalence of fabrication and falsification in the last 3 years

Gopalakrishna, G., ter Riet, G., Vink, G., Stoop, I., Wicherts, J. M., & Bouter, L. M. (2022). Prevalence of questionable research practices, research misconduct and their potential explanatory factors: A survey among academic researchers in The Netherlands. PLOS ONE, 17(2), e0263023. https://doi.org/10.1371/journal.pone.0263023

(Un)Intentional?

- Intentional?

- “Evil researcher” who only cares about his/her career and not at all about truth-seeking?

- “We urge the social science community to redefine p-hacking as a series of deceptive research practices rather than ones that are merely questionable.” (Craig et al., 2020)

- Unintentional?

- Lack of education/knowledge?

- Wrong/uncritical standards of the field?

- Pushed by supervisors, reviewers, or editors? ➙ http://bulliedintobadscience.org/

- Simply being human?

- Distorting effects on the published record are probably comparable, but the ethical evaluation differs strongly.

Honest Errors

Human errors and honest mistakes

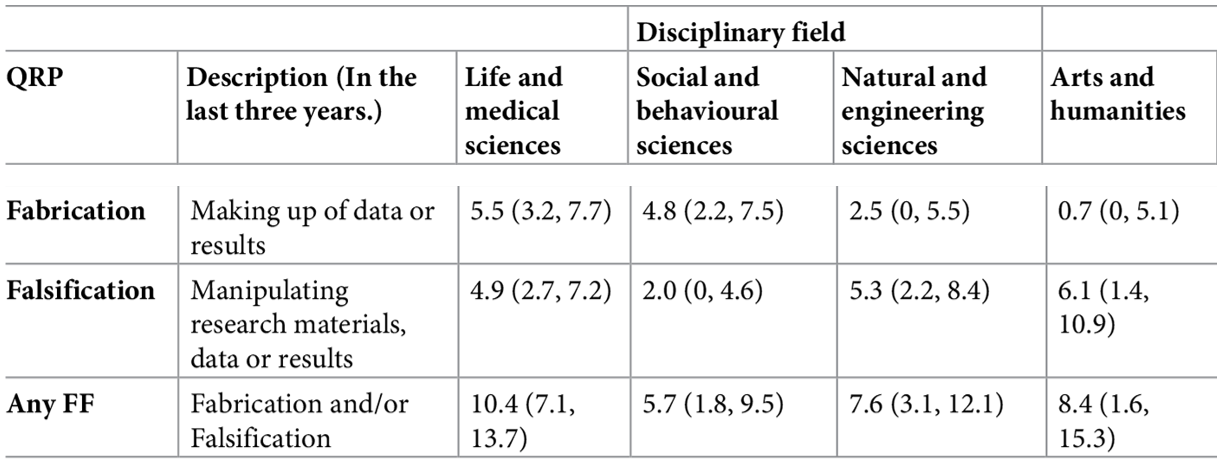

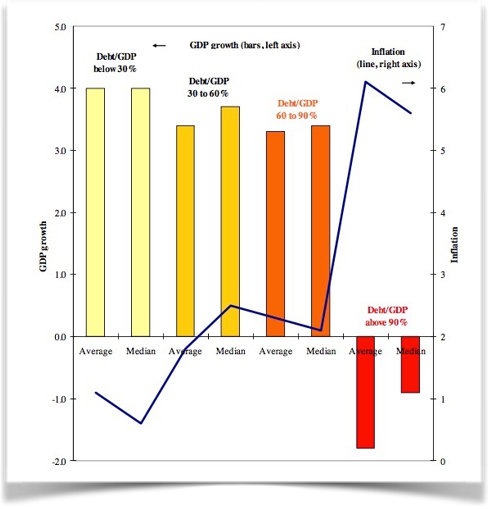

“90% Excel-Gate”

Human errors and honest mistakes

“90% Excel-Gate” - Lessons learned

Important

The most important point of the story: The original authors shared their raw data, which made it possible to correct the honest mistake!

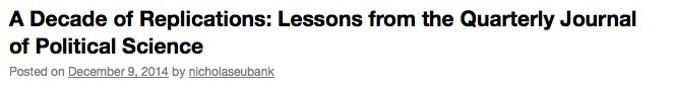

Statistical errors

- Reproducible analysis code and open data required at submission - “in-house checking” in review process

- 54% of all submissions had results in the paper that did not match the computed results from the code

- Wrong signs, wrong labeling of regression coefficients, errors in sample sizes, wrong descriptive stats

Eubank, N. (2016). Lessons from a decade of replications at the quarterly journal of political science. PS: Political Science & Politics, 49(2), 273-276. https://doi.org/10.1017/S1049096516000196

Statistical inconsistencies

Statistical inconsistencies

- Does the statistical conclusion change? ➙ “strong/gross inconsistency”

- Psychology:

- 16,695 scanned papers with statcheck tool (Nuijten et al., 2016)

- 50% of papers contain statistical inconsistencies

- 13% contained strong errors (i.e., where the statistical conclusion changes).

- Economics

- 3,677 scanned papers with DORIS tool (Bruns et al., 2023)

- 36% of papers contain statistical inconsistencies

- 15% contained strong errors (i.e., where the statistical conclusion changes).

- Other fields (e.g. Sociology):

- Not even (automatically) checkable, due to unstandardized reporting practices.

- … and these are only the detected errors of one type (stat. inconsistency)!

Nuijten et al. (2016). The prevalence of statistical reporting errors in psychology (1985–2013). Behavior research methods, 48(4), 1205-1226. https://doi.org/10.3758/s13428-015-0664-2. Bruns et al.(2023). Statistical reporting errors in economics [Preprint]. MetaArXiv. https://doi.org/10.31222/osf.io/mbx62
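The idea behind such automated checks can be sketched in a few lines: recompute the p-value from the reported test statistic and compare it with the reported p-value. The sketch below is not the statcheck or DORIS implementation. It handles only z statistics, and the function name `check_reported_z` and the fixed tolerance `tol` are assumptions made for illustration.

```python
from statistics import NormalDist


def check_reported_z(z, reported_p, alpha=0.05, tol=0.005):
    """Compare a reported two-sided p-value with the one implied by z.

    Returns (recomputed_p, inconsistent, strong_inconsistency), where a
    strong inconsistency means the statistical conclusion changes.
    """
    recomputed_p = 2 * (1 - NormalDist().cdf(abs(z)))
    inconsistent = abs(recomputed_p - reported_p) > tol
    strong = inconsistent and ((recomputed_p < alpha) != (reported_p < alpha))
    return recomputed_p, inconsistent, strong


# Hypothetical reported results, made up for this example
examples = [(2.10, 0.036), (2.50, 0.030), (1.80, 0.040)]
for z, p in examples:
    recomputed, inconsistent, strong = check_reported_z(z, p)
    print(f"z = {z:.2f}, reported p = {p:.3f}, recomputed p = {recomputed:.3f}, "
          f"inconsistent: {inconsistent}, strong: {strong}")
```

In this made-up set, the first result is consistent, the second is inconsistent without changing the conclusion, and the third is a strong inconsistency because the recomputed p-value lies above .05 while the reported one lies below.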

Reproducibility Success

- Crüwell et al. (2022), Psychology: Numerical results of less than 30% of papers can be reproduced

- Krähmer et al. (2026), Sociology:

- Only 3% of authors share analysis code proactively

- Upon request, 34% shared their code.

- Of these, 51% were perfectly reproducible.

- If non-shared cases are classified as non-reproducible, 18% of results reproduced.

What does this all mean?

Bias + (maybe unintentional) p-hacking + human (honest) mistakes = untrustworthy research findings?

Note

- Published findings across fields to be viewed with caution?

- “We know” ➙ “We think we know”?

- More research to verify existing “truths”?

A romanticized idea of research?

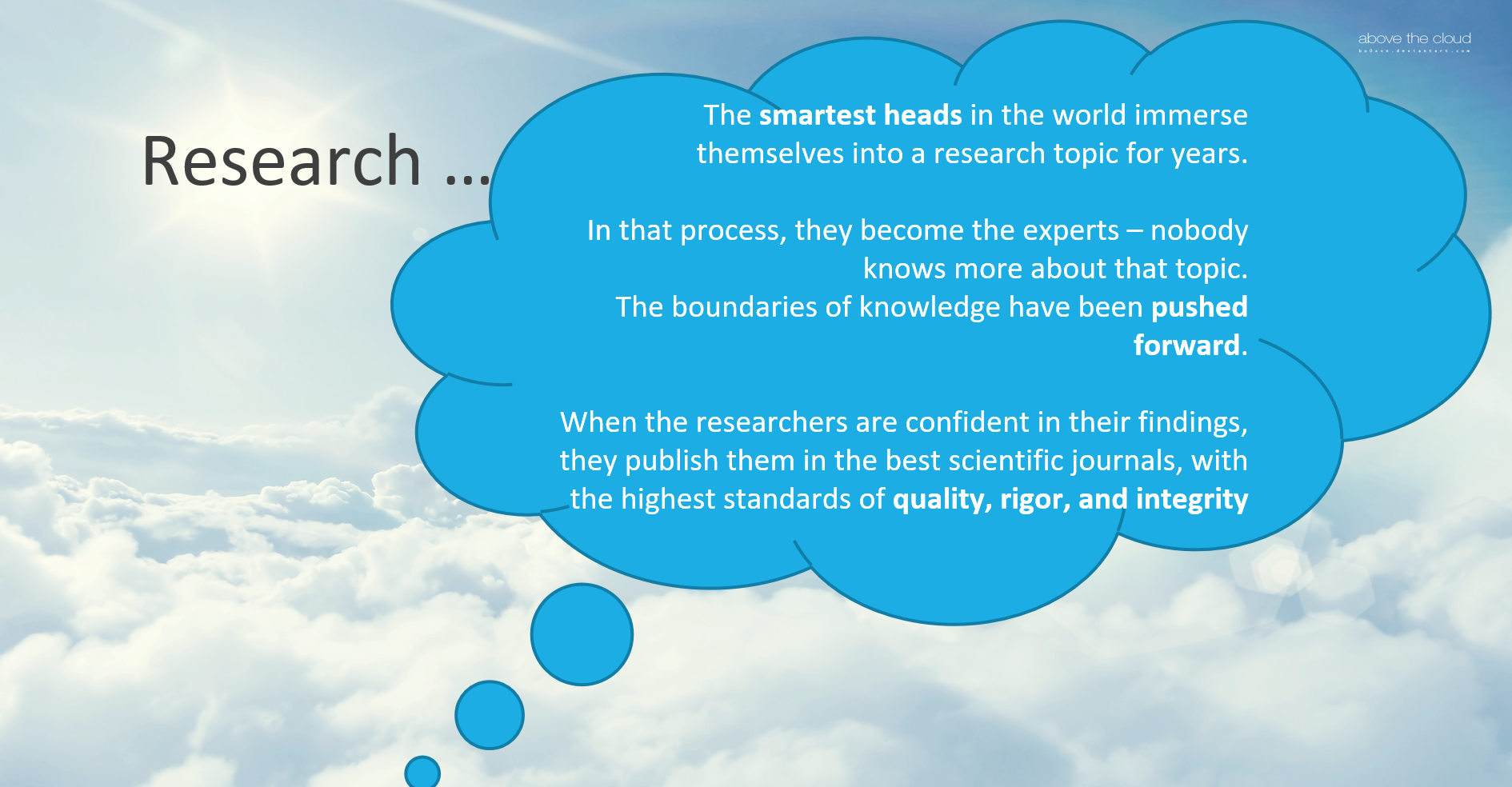

Going even further: The perpetuating role of AI

Emsley, R. (2023). ChatGPT: These are not hallucinations - they’re fabrications and falsifications. Schizophrenia, 9(1), 52. Lautrup, A. D., Hyrup, T., Schneider-Kamp, A., Dahl, M., Lindholt, J. S., & Schneider-Kamp, P. (2023). Heart-to-heart with ChatGPT: the impact of patients consulting AI for cardiovascular health advice. Open Heart, 10(2). Shekar, S., Pataranutaporn, P., Sarabu, C., Cecchi, G. A., & Maes, P. (2025). People Overtrust AI-Generated Medical Advice despite Low Accuracy. NEJM AI, 2(6), AIoa2300015.

Now what?

This image was taken from the Geograph project collection. The copyright on this image is owned by Chris Martin and is licensed for reuse under the Creative Commons Attribution-ShareAlike 2.0 license.

A new way of doing research

Open Research

aka

A scientific framework for the 21st century

To be continued …

Reflection activity

One-minute paper: Imagine you had to explain the current challenges in research you heard about today to a friend. Write down what you would say to them.

Take-home message

What are you taking away from today?

Take-home message

What are you taking away from today?

Remember: There are solutions!

Research is not “doomed” - on the contrary. More on this in the next session!

Thanks!

See you next class :)

Additional exercises: Replicability and reproducibility

Decide whether each scenario in the following slides is an example of reproducibility or replicability.

Scenario 1

A computational neuroscientist reruns a published fMRI analysis using the original dataset and Python scripts to verify the reported brain activation patterns.

Scenario 2

An environmental scientist repeats a field experiment on soil nutrient levels using the same sampling protocol at a different site.

Scenario 3

A linguist reanalyzes a corpus of historical texts using the same annotation guidelines and code to verify reported patterns of syntactic structures.

Scenario 4

A psychology lab replicates a social behavior experiment using new participants from a different cultural background.

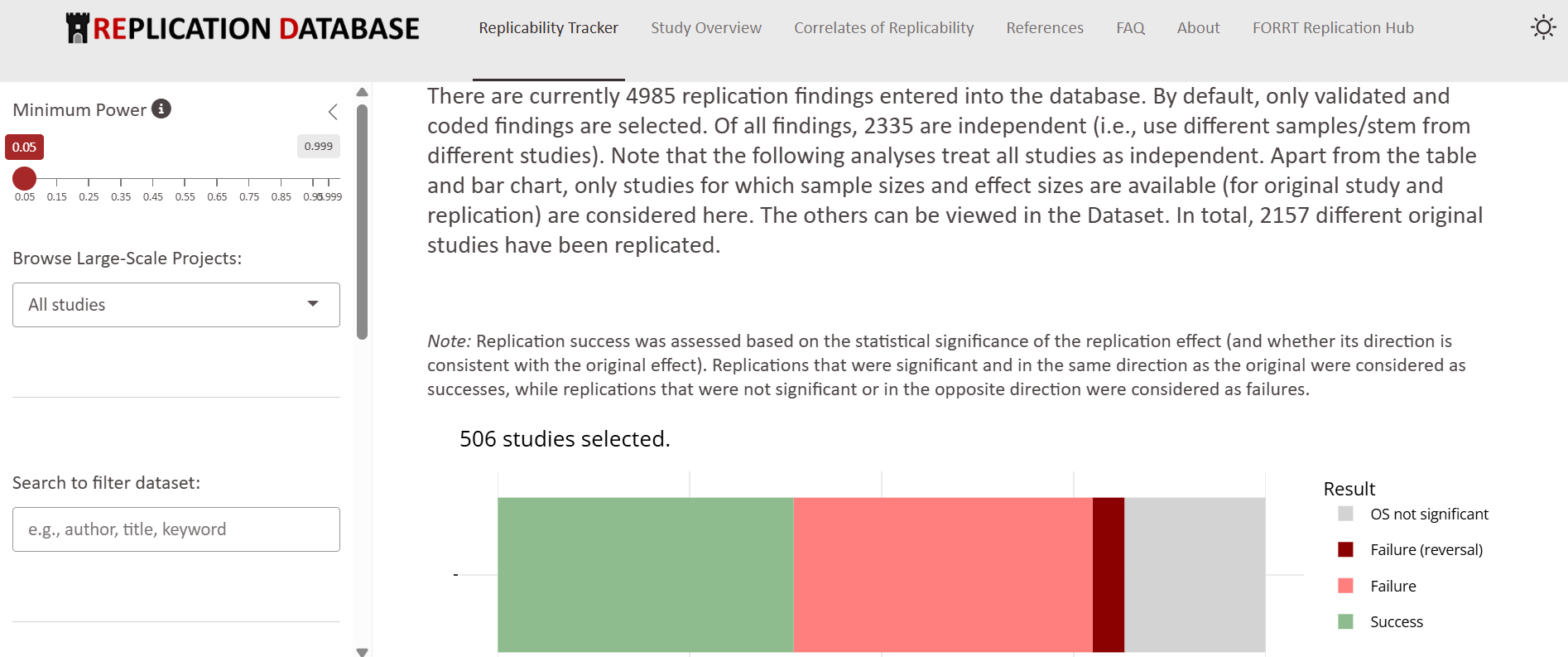

Psychology: The Replication Database by FORRT

- Collects replication results across different psychological fields

- Provides a detailed overview of the original findings and the replication outcomes

FORRT Replication Database (https://forrt-replications.shinyapps.io/fred_explorer/)

References

Additional slides TO REMOVE?

What published research can be replicated/reproduced? REMOVE

| Field | Success | Failure |
|---|---|---|
| OSC (2015) – Psychology | 36% | 64% |
| Chang & Li (2015) – Economics (67 papers, 29 papers replicated) | 43% | 57% |
| Camerer 2016 – Econ laboratory | 61% | 39% |
| Camerer combined Social Sci | 62% | 38% |
| Begley & Ellis (2012) – Cancer Research | 11% | 89% |
| Prinz et al. (2011) – Pharmaceutical research | 35% | 65% |
| Cova et al. (2018) – x-philosophy | 70% | 30% |
| Protzko et al. (2023) – Social | 86% | 14% |

Why should we trust researchers?

…right? MOVE

Decide where to add - maybe not needed?

Ideal scenario: Balancing the desire to stay truthful to research with the necessity to publish?

LMU Open Science Center